The irony: I built a multi-agent system to search for multi-agent roles. The system demonstrated the competencies better than any interview could. And no, it is not gaming the system: Career-Ops automates analysis, not decisions.

I built an AI system to search for a job. It worked — I am now Head of Applied AI. Then I published it on GitHub and it exploded: 41.4K+ stars, viral, articles in France, China, and Korea. Week one of my AI job search was all manual. By week two I had stopped applying — I was building Career-Ops.

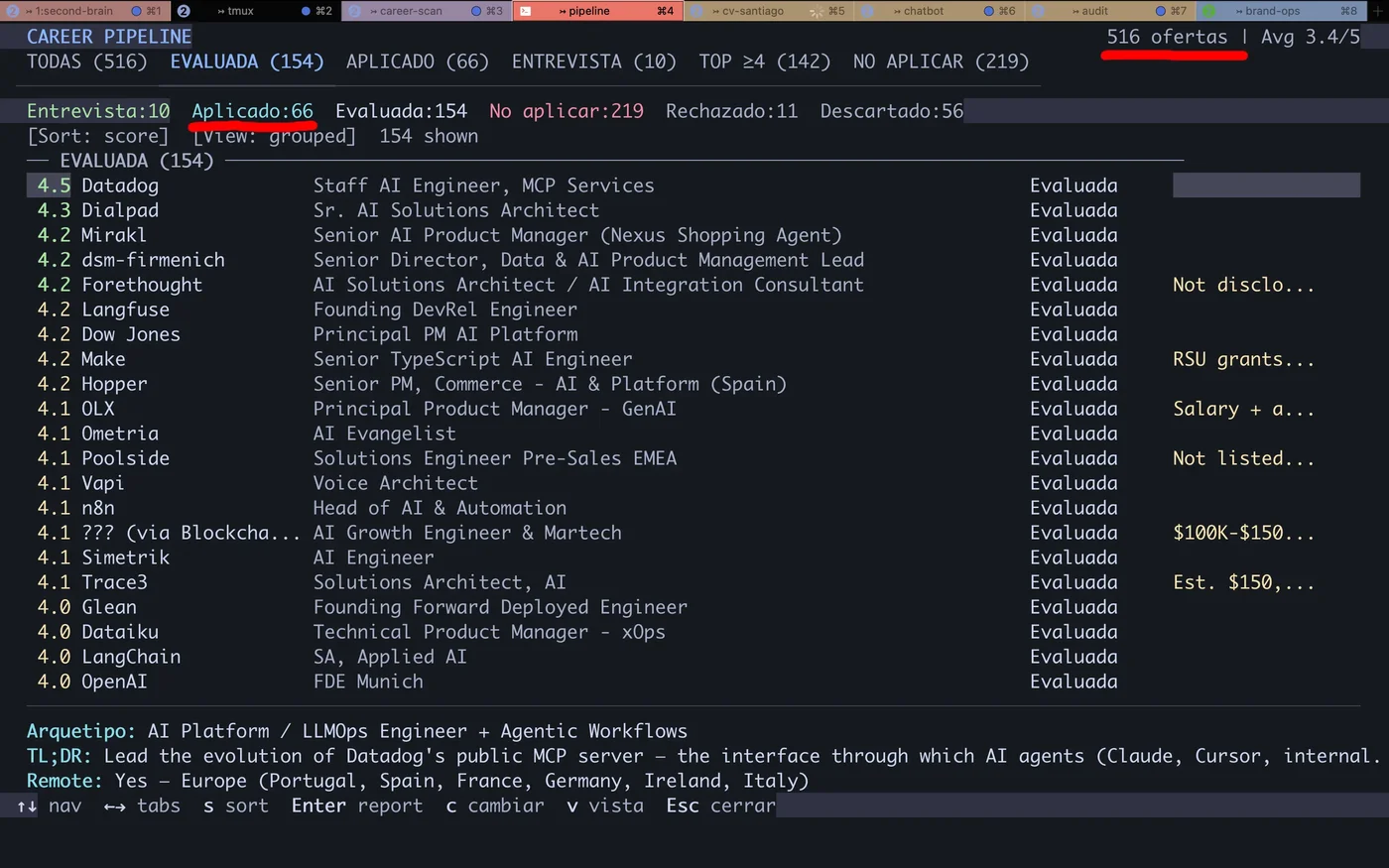

631 evaluations later, Career-Ops was filtering better than I was. It is an AI-powered job search tool built as a multi-agent system: it reads job descriptions, scores them across 10 dimensions, generates personalized resumes, and prepares applications. I reviewed and decided; the AI did the analytical work. The system demonstrated exactly the competencies the target roles required, and that did not go unnoticed.

Why Did I Need to Automate My Job Search?

Searching for senior AI engineering roles is a full-time job in itself. Each offer requires reading the JD, mapping your skills against requirements, adapting the CV, writing personalized responses, and filling 15-field forms. Multiply that by 10 offers per day.

Repetitive reading.

70% of offers are a poor fit. You find out after reading 800 words of JD.

Generic CVs.

A static PDF cannot highlight the proof points relevant to each specific offer.

Manual forms.

Every platform asks the same questions in different formats. Copy-paste 15 times per application.

No tracking.

Without a system, you forget where you applied. Duplicate effort or lose follow-up entirely.

Zero feedback.

Apply, wait, and never know if the problem was fit, the CV, or timing.

Global market.

The AI sector moves internationally. Local referrals do not scale when you apply to companies across 6 different countries.

The work is not hard. It is repetitive. And repetitive work gets automated.

How Does the Multi-Agent System Work?

Career-Ops is not a script or an auto-apply bot. It is a multi-agent system with 12 operational modes, each a Claude Code skill file with its own context, rules, and tools. The result is an agent that reasons about the problem domain and executes the right action.

Why Modes, Not One Prompt

Precise context.

Each mode loads only the information it needs. auto-pipeline skips contact rules. apply skips scoring logic.

Testability.

One mode gets tested in isolation. Changing PDF logic never touches evaluation.

Independent evolution.

Adding a new mode never breaks existing ones. Training mode shipped 3 weeks after first deploy.
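To make the "precise context" idea concrete, here is a minimal sketch in Python of a per-mode context registry. It is illustrative only: the real modes are Claude Code skill files, and every file name below is a hypothetical placeholder. Only the mode names and the two exclusions (auto-pipeline skips contact rules, apply skips scoring logic) come from the text above.

```python
# Hypothetical context files; only the mode names come from the article.
SHARED_CONTEXT = ["cv.md", "preferences.md"]

MODE_CONTEXT = {
    "auto-pipeline": ["scoring_rules.md", "report_template.md", "pdf_rules.md"],
    "apply": ["form_rules.md", "cached_evaluations.md"],
    "contacto": ["contact_rules.md"],
}


def context_for(mode: str) -> list[str]:
    """Return the files a mode loads: the shared profile plus its own rules only."""
    if mode not in MODE_CONTEXT:
        raise ValueError(f"unknown mode: {mode}")
    return SHARED_CONTEXT + MODE_CONTEXT[mode]


if __name__ == "__main__":
    for name in MODE_CONTEXT:
        print(name, "->", context_for(name))
```

Because each mode owns its own entry, adding a new mode is a new key in the registry and does not touch the others.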

auto-pipeline: Full pipeline that extracts the JD, evaluates A-F, and generates the report, PDF, and tracker entry.

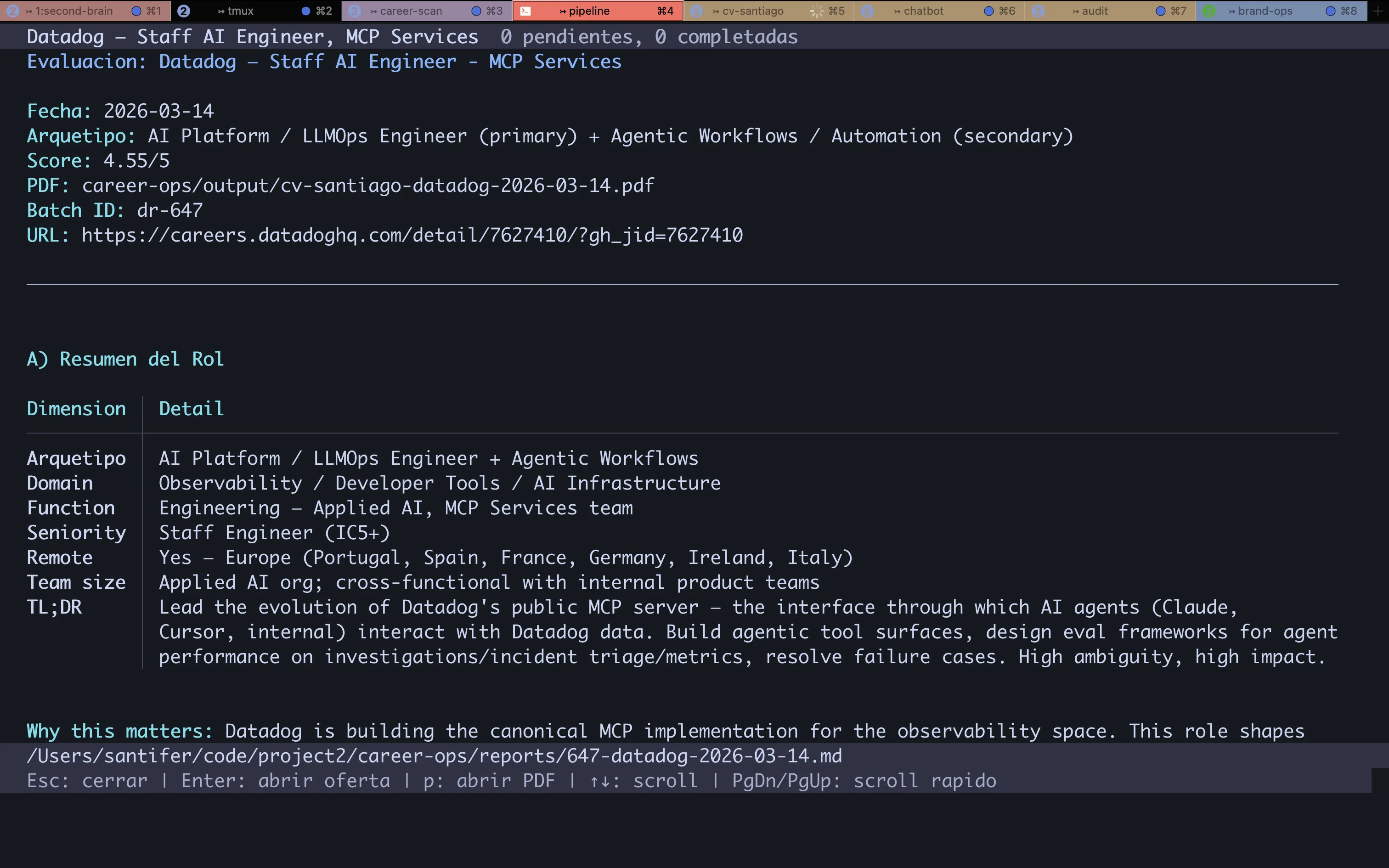

oferta: Single-offer evaluation in 6 blocks (summary, CV match, level, compensation, personalization, interview).

ofertas: Multi-offer comparison and ranking.

pdf: ATS-optimized PDF personalized per offer with proof points and keywords.

pipeline: Batch URL processing from inbox.

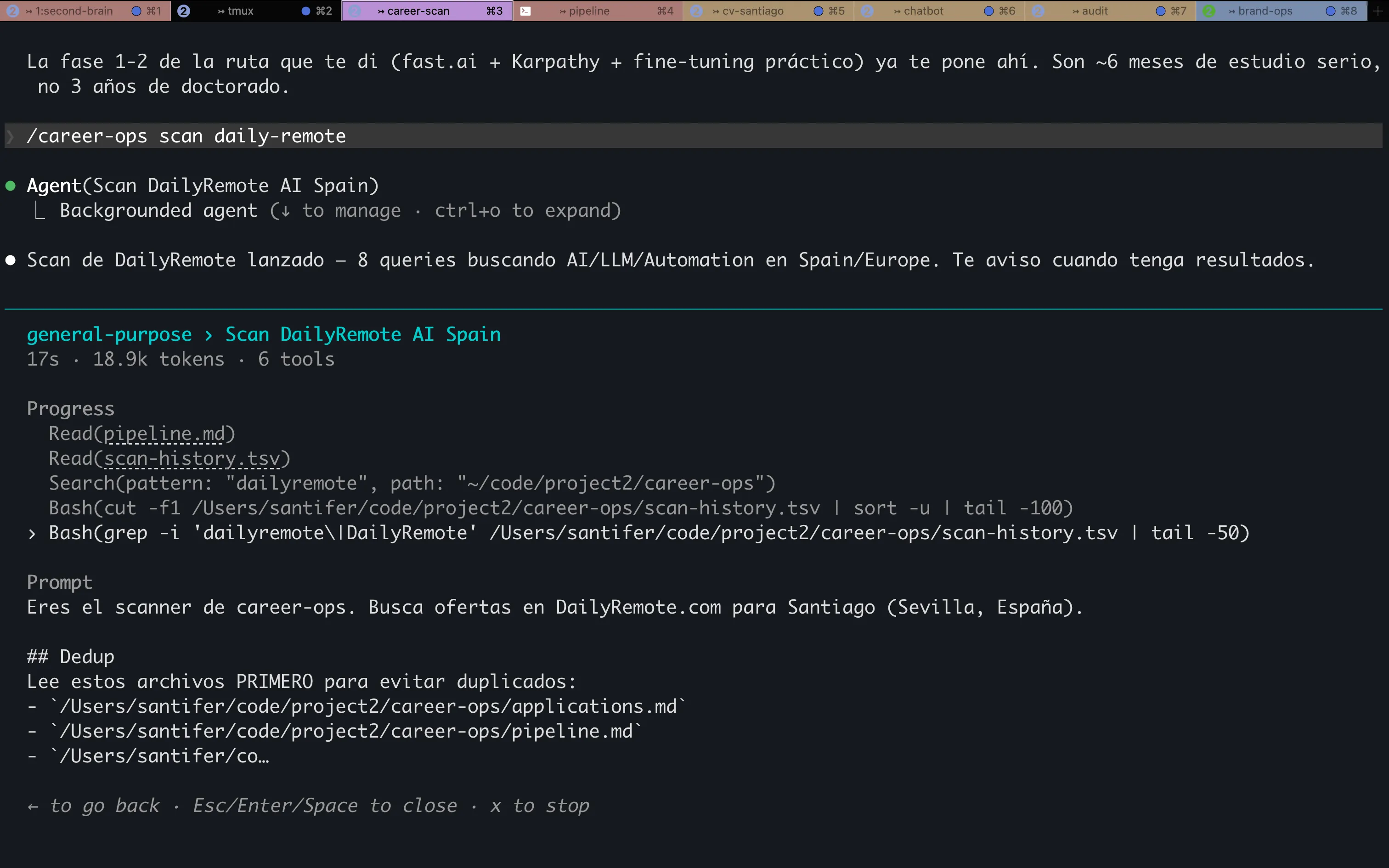

scan: Offer discovery. Navigates job boards and careers pages of target companies. Many offers never appear on aggregators.

batch: Parallel processing with conductor + workers. 122 simultaneous URLs in queue.

apply: Interactive form-filling with Playwright. Reads the page, retrieves cached evaluation, generates responses.

contacto: LinkedIn outreach helper.

deep: Deep company research.

tracker: Application status dashboard.

training: Evaluates courses and certifications against the North Star.

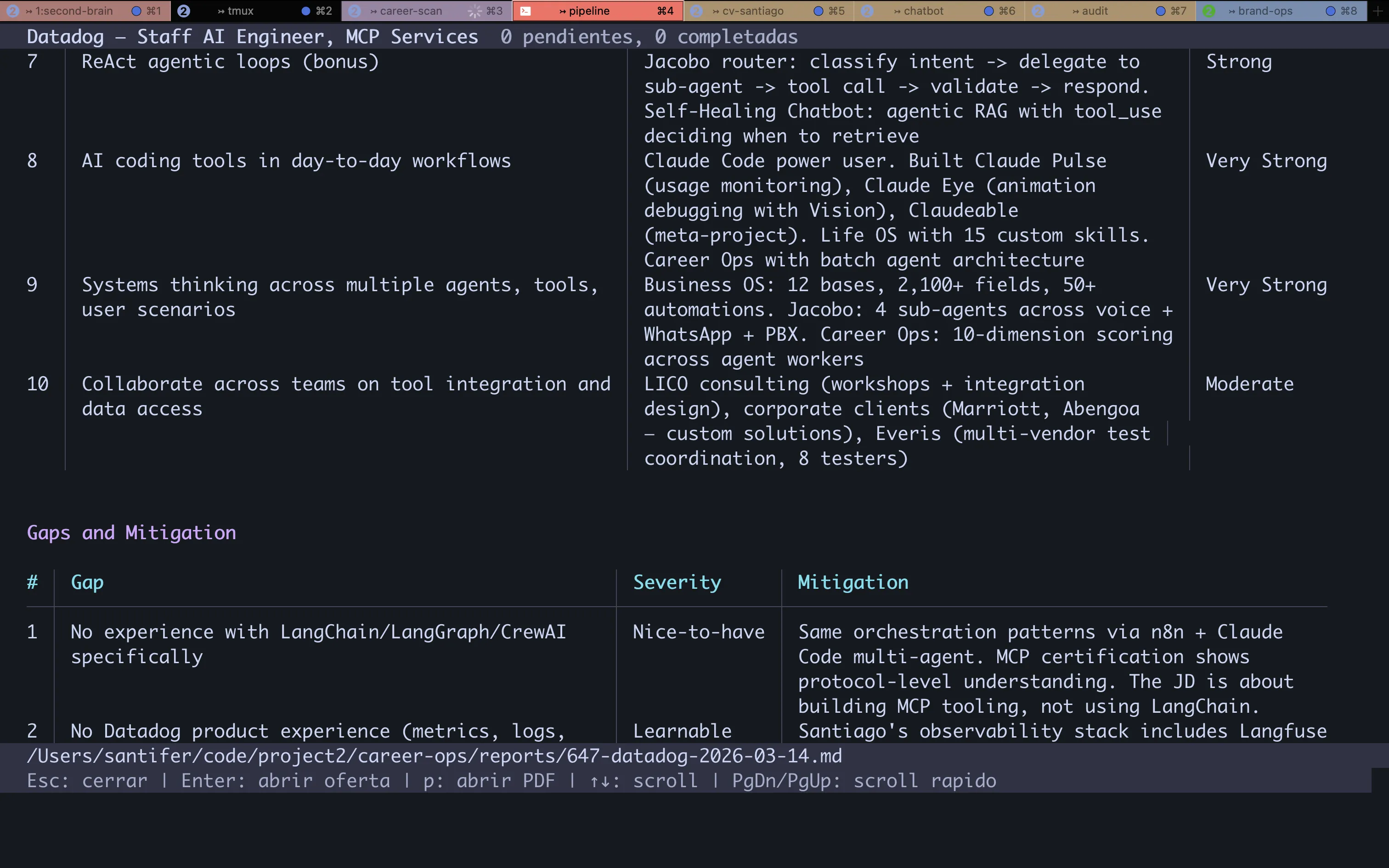

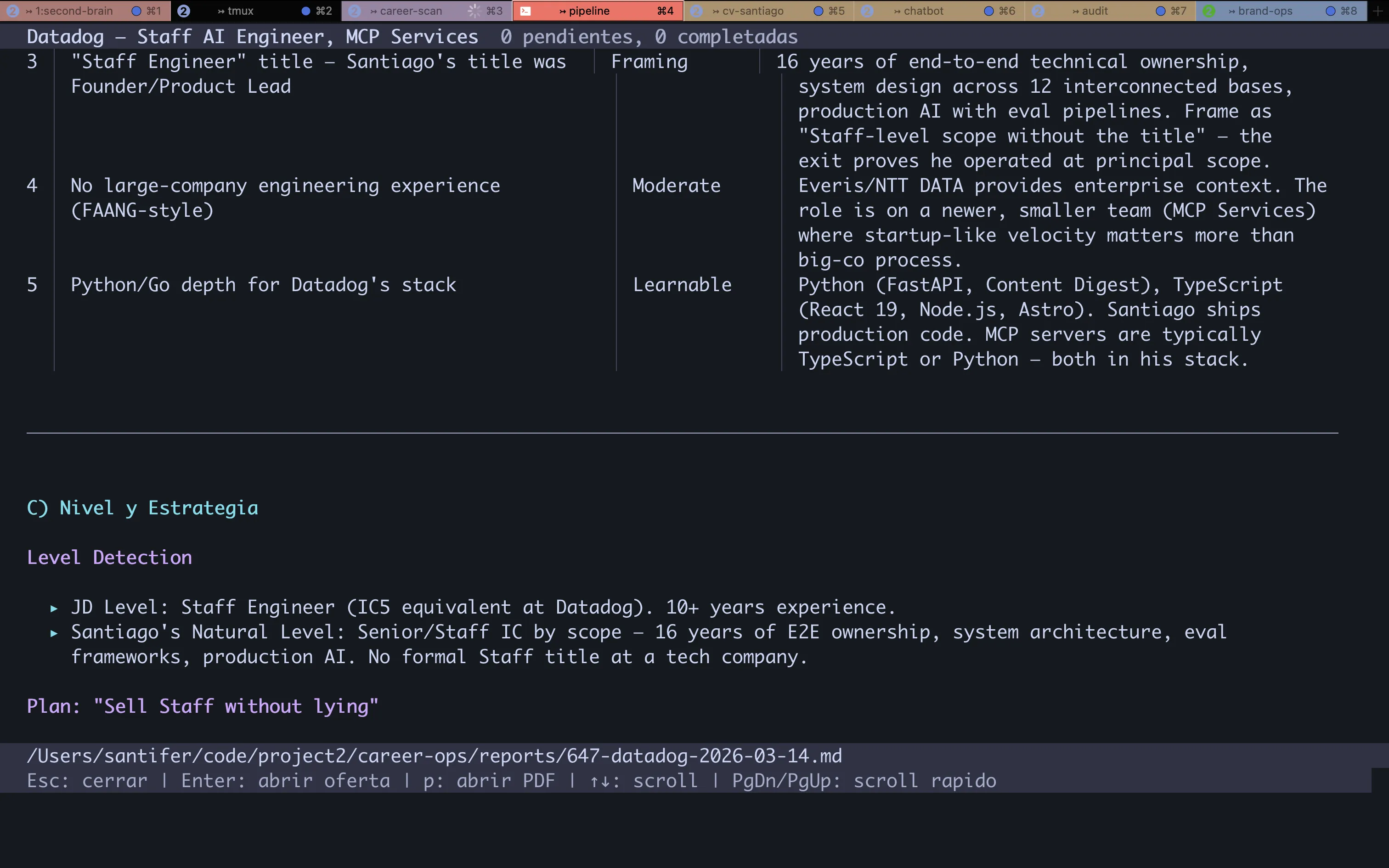

How Does Career-Ops Evaluate Each Job Offer?

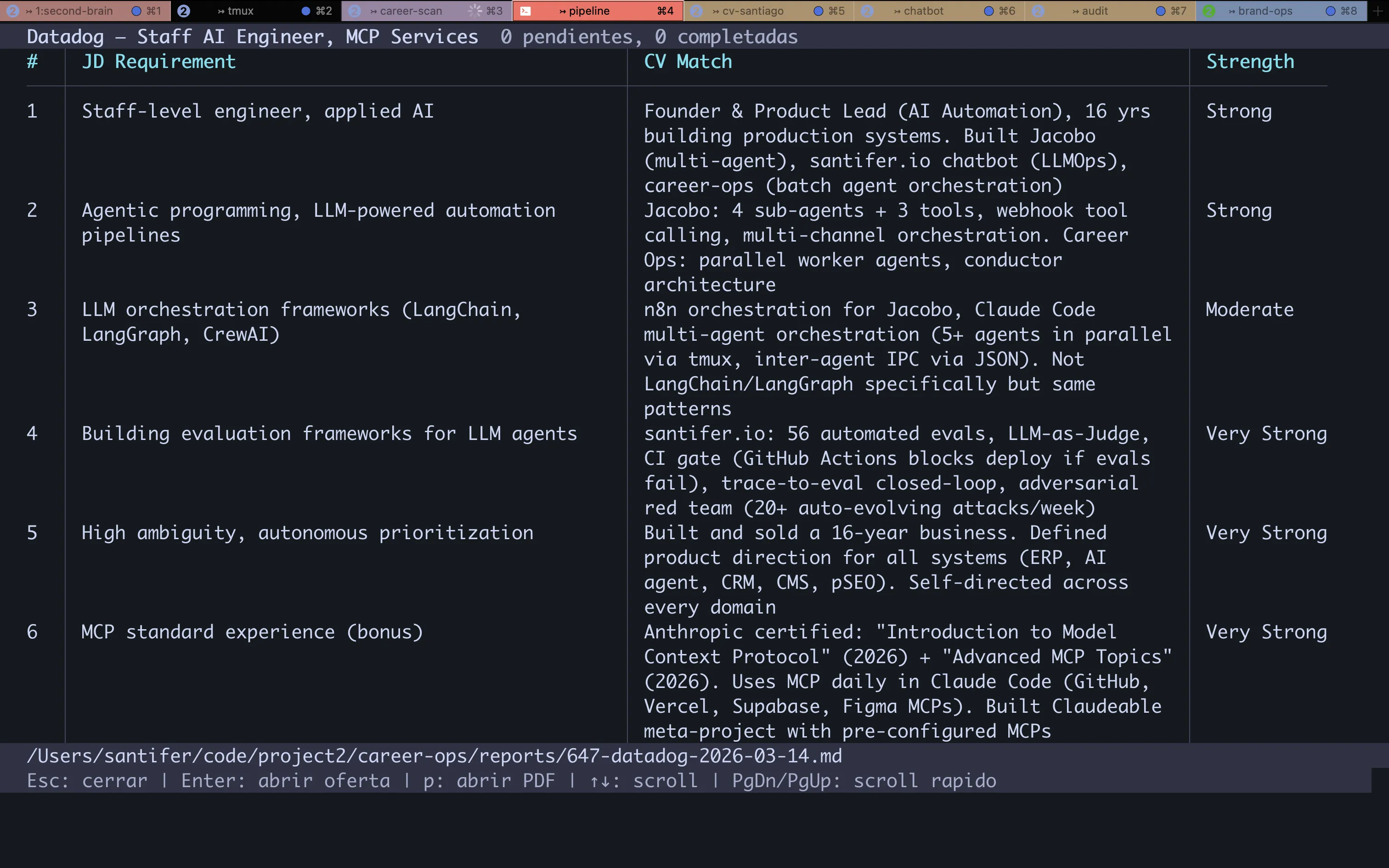

Every offer runs through an evaluation framework with 10 weighted dimensions. The output: a numeric score (1-5) and an A-F grade. Not all dimensions carry equal weight — Role Match and Skills Alignment are gate-pass: if they fail, the final score drops.

| Dimension | What It Measures | Weight |
|---|---|---|
| Role Match | Alignment between requirements and CV proof points | Gate-pass |
| Skills Alignment | Tech stack overlap | Gate-pass |
| Seniority | Stretch level and negotiability | High |
| Compensation | Market rate vs target | High |
| Geographic | Remote/hybrid/onsite feasibility | Medium |
| Company Stage | Startup/growth/enterprise fit | Medium |
| Product-Market Fit | Problem domain resonance | Medium |
| Growth Trajectory | Career ladder visibility | Medium |
| Interview Likelihood | Callback probability | High |
| Timeline | Closing speed and hiring urgency | Low |

Score Distribution

| Score | Grade | Count |
|---|---|---|
| >= 4.5 | A | 21 |
| 4.0-4.4 | B | 52 |
| 3.0-3.9 | C | 71 |
| < 3.0 | D-F | 51 |

74% of evaluated offers score below 4.0. Without the system, I would have spent hours reading JDs that never fit.
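The weighted scoring with gate-pass dimensions can be sketched as follows. This is a hedged Python illustration, not the project's actual logic: the article gives only qualitative weights, so the numeric weights, the gate threshold, and the cap applied when a gate fails are all assumptions. The dimension names and the grade bands (A, B, C, D-F) come from the section above.

```python
# Assumed numeric weights; the article only says Gate-pass / High / Medium / Low.
WEIGHTS = {"gate": 3.0, "high": 2.0, "medium": 1.0, "low": 0.5}

DIMENSIONS = {
    "role_match": "gate",
    "skills_alignment": "gate",
    "seniority": "high",
    "compensation": "high",
    "geographic": "medium",
    "company_stage": "medium",
    "product_market_fit": "medium",
    "growth_trajectory": "medium",
    "interview_likelihood": "high",
    "timeline": "low",
}

GATE_MINIMUM = 3.0  # assumption: a gate dimension below this counts as failed
GATE_CAP = 2.9      # assumption: a failed gate caps the final score here


def final_score(scores: dict[str, float]) -> float:
    """Weighted average on the 1-5 scale, capped when a gate dimension fails."""
    total_weight = sum(WEIGHTS[level] for level in DIMENSIONS.values())
    weighted = sum(scores[name] * WEIGHTS[level] for name, level in DIMENSIONS.items())
    score = weighted / total_weight
    gate_failed = any(
        scores[name] < GATE_MINIMUM
        for name, level in DIMENSIONS.items()
        if level == "gate"
    )
    if gate_failed:
        score = min(score, GATE_CAP)
    return round(score, 1)


def grade(score: float) -> str:
    """Map a score to the grade bands listed in the score distribution."""
    if score >= 4.5:
        return "A"
    if score >= 4.0:
        return "B"
    if score >= 3.0:
        return "C"
    return "D-F"
```

With these assumed values, an offer that scores 5 everywhere except a 2 on Role Match is capped at 2.9 and lands in D-F, which is the "final score drops" behavior described above.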

What Happens From URL Input to Generated Resume?

auto-pipeline is the flagship mode. A URL goes in, and out comes an evaluation report, a personalized PDF, and a tracker entry. Zero manual intervention until final review.

Extract JD.

Playwright navigates to the URL, extracts structured content from the offer.

Evaluate 10D.

Claude reads JD + CV + portfolio and generates scoring across all 10 dimensions.

Generate report.

Markdown with 6 blocks: executive summary, CV match, level, compensation, personalization, and interview probability.

Generate PDF.

HTML template + keyword injection + adaptive framing. Puppeteer renders to PDF.

Register tracker.

TSV with company, role, score, grade, URL. Auto-merge via Node.js script.

Dedup.

Checks scan-history.tsv (680 URLs) and applications.md. Zero re-evaluations.
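The dedup step can be sketched in a few lines of Python. The article describes an exact URL match against scan-history.tsv plus a normalized company+role match against applications.md. The normalization rule below (lowercase, strip punctuation, collapse whitespace) and the in-memory sets standing in for those two files are assumptions made for illustration.

```python
import re


def normalize(text: str) -> str:
    """Lowercase, drop punctuation, collapse whitespace (assumed normalization)."""
    return " ".join(re.sub(r"[^a-z0-9 ]+", " ", text.lower()).split())


def is_duplicate(
    url: str,
    company: str,
    role: str,
    seen_urls: set[str],
    applied: set[tuple[str, str]],
) -> bool:
    """True if the URL was already scanned or the company+role was already tracked."""
    if url in seen_urls:  # exact URL match
        return True
    return (normalize(company), normalize(role)) in applied


if __name__ == "__main__":
    # Placeholder data standing in for scan-history.tsv and applications.md.
    seen = {"https://example.com/jobs/1"}
    tracked = {(normalize("Acme, Inc."), normalize("Senior AI Engineer"))}
    print(is_duplicate("https://example.com/jobs/2", "ACME Inc", "senior AI engineer", seen, tracked))
```

The second check matters because the same role often appears under several URLs (company site, job board, aggregator), which an exact URL match alone would miss.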

Batch Processing

For high volume, batch mode launches a conductor that orchestrates parallel workers. Each worker is an independent Claude Code process with 200K context. The conductor manages the queue, tracks progress, and merges results.

122 URLs in queue

200K context per worker

2 retries per failure

Fault-tolerant: a worker failure never blocks the rest. Lock file prevents double execution. Batch is resumable — reads state and skips completed items.
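The conductor pattern described above can be sketched in Python under a few stated assumptions. In Career-Ops each worker is a separate Claude Code process running in tmux; here a thread pool and a plain callable stand in for that, and the JSON state file and `.lock` suffix are hypothetical names. The two retries per failure, the lock file, and the resume-by-skipping behavior follow the description.

```python
import json
import os
from concurrent.futures import ThreadPoolExecutor

MAX_RETRIES = 2  # the article states 2 retries per failure


def run_with_retries(worker, url):
    """Run one worker, retrying on failure; never raises, so one failure cannot block the rest."""
    last_error = ""
    for _attempt in range(1 + MAX_RETRIES):
        try:
            return {"url": url, "status": "done", "result": worker(url)}
        except Exception as exc:
            last_error = str(exc)
    return {"url": url, "status": "failed", "error": last_error}


def run_batch(urls, worker, state_path, max_workers=4):
    """Process URLs in parallel, skipping items a previous run already completed."""
    lock_path = state_path + ".lock"
    # O_EXCL makes this raise FileExistsError if another batch holds the lock.
    fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    try:
        state = {}
        if os.path.exists(state_path):
            with open(state_path, encoding="utf-8") as fh:
                state = json.load(fh)
        pending = [u for u in urls if state.get(u, {}).get("status") != "done"]
        with ThreadPoolExecutor(max_workers=max_workers) as pool:
            for outcome in pool.map(lambda u: run_with_retries(worker, u), pending):
                state[outcome["url"]] = outcome
                # Persist after every item so an interrupted batch can resume.
                with open(state_path, "w", encoding="utf-8") as fh:
                    json.dump(state, fh, indent=2)
        return state
    finally:
        os.close(fd)
        os.remove(lock_path)
```

In this sketch failed items stay in the pending set on the next run, while completed ones are skipped, which is one reasonable reading of "reads state and skips completed items".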

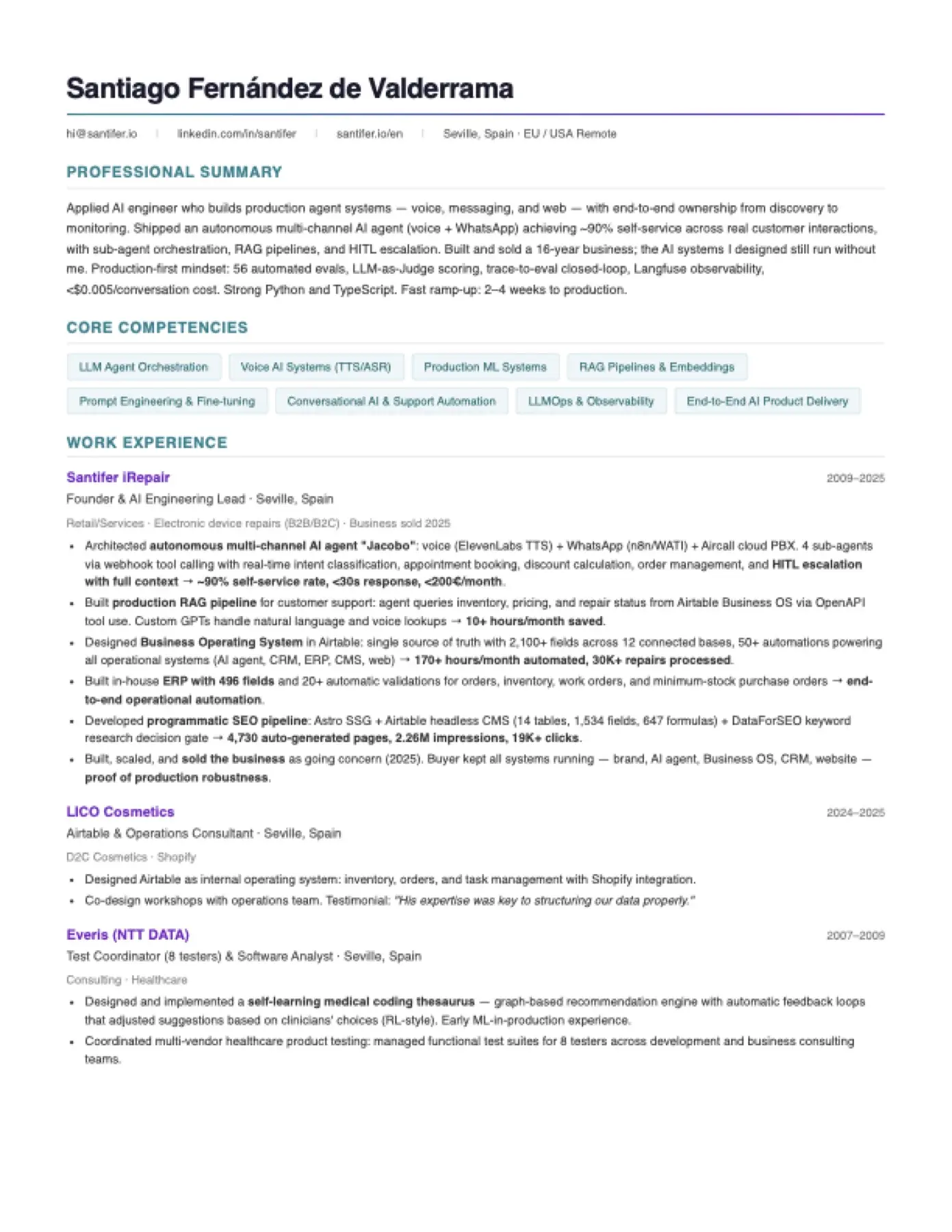

How Does Career-Ops Generate a Personalized Resume?

A generic CV loses. Career-Ops works as an AI resume builder that generates a different ATS-optimized resume for each offer, injecting JD keywords and reordering experience by relevance. Not a template: a resume built from real CV proof points.

Extract 15-20 keywords from the JD.

Keywords land in the summary, first bullet of each role, and skills section.

Detect language.

English JD generates English CV. Spanish JD generates Spanish CV.

Detect region.

US company generates Letter format. Europe generates A4.

Detect archetype.

6 North Star archetypes. The summary shifts based on the profile.

Select projects.

Top 3-4 by relevance. Jacobo for agent roles. Business OS for ERP/automation.

Reorder bullets.

The most relevant experience moves up. The rest moves down — nothing disappears.

Render PDF.

Puppeteer converts HTML to PDF. Self-hosted fonts, single-column ATS-safe.
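Two of the steps above, region detection and bullet reordering, are simple enough to sketch in Python. This is an assumption-laden illustration: the real system relies on Claude to judge relevance, whereas this sketch uses a plain keyword count, and the default of A4 for every non-US region is my assumption (the article only names US and Europe). The sample bullets are placeholders.

```python
def page_format(region: str) -> str:
    """US offers get Letter; anything else falls back to A4 (assumed default)."""
    return "Letter" if region.strip().upper() == "US" else "A4"


def reorder_bullets(bullets: list[str], keywords: list[str]) -> list[str]:
    """Move bullets matching more JD keywords up; nothing is removed.

    sorted() is stable, so bullets with equal keyword counts keep
    their original relative order.
    """
    lowered = [k.lower() for k in keywords]

    def hits(bullet: str) -> int:
        text = bullet.lower()
        return sum(1 for k in lowered if k in text)

    return sorted(bullets, key=hits, reverse=True)


if __name__ == "__main__":
    sample = [
        "Ran day-to-day operations for a retail business",
        "Built multi-agent LLM workflows for customer support",
        "Maintained internal reporting dashboards",
    ]
    print(page_format("US"))
    print(reorder_bullets(sample, ["multi-agent", "LLM"]))
```

The stable sort is what keeps the "nothing disappears" guarantee: the output is always a permutation of the input.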

6 Archetypes

| Archetype | Primary Proof Point |
|---|---|
| AI Platform / LLMOps | Self-Healing Chatbot (71 evals, closed-loop) |
| Agentic Workflows | Jacobo (4 agents, 80h/mo automated) |
| Technical AI PM | Business OS (2,100 fields, 50 automations) |
| AI Solutions Architect | pSEO (4,730 pages, 10.8x traffic) |
| AI FDE | Jacobo (sold, running in production) |
| AI Transformation Lead | Exit 2025 (16 years, buyer kept all systems) |

Same CV. 6 different framings. All real — keywords get reformulated, never fabricated.

Before and After

| Dimension | Manual | Career-Ops |
|---|---|---|
| Evaluation | Read JD, mental mapping | 10D automated scoring, A-F grade |
| CV | Generic PDF | Personalized PDF, ATS-optimized |
| Application | Manual form | Playwright auto-fill |
| Tracking | Spreadsheet or nothing | TSV + automated dedup |
| Discovery | LinkedIn alerts | Scanner: job boards + target company careers pages |
| Batch | One at a time | 122 URLs in parallel |
| Dedup | Human memory | 680 URLs deduplicated |

What Results Has Career-Ops Achieved?

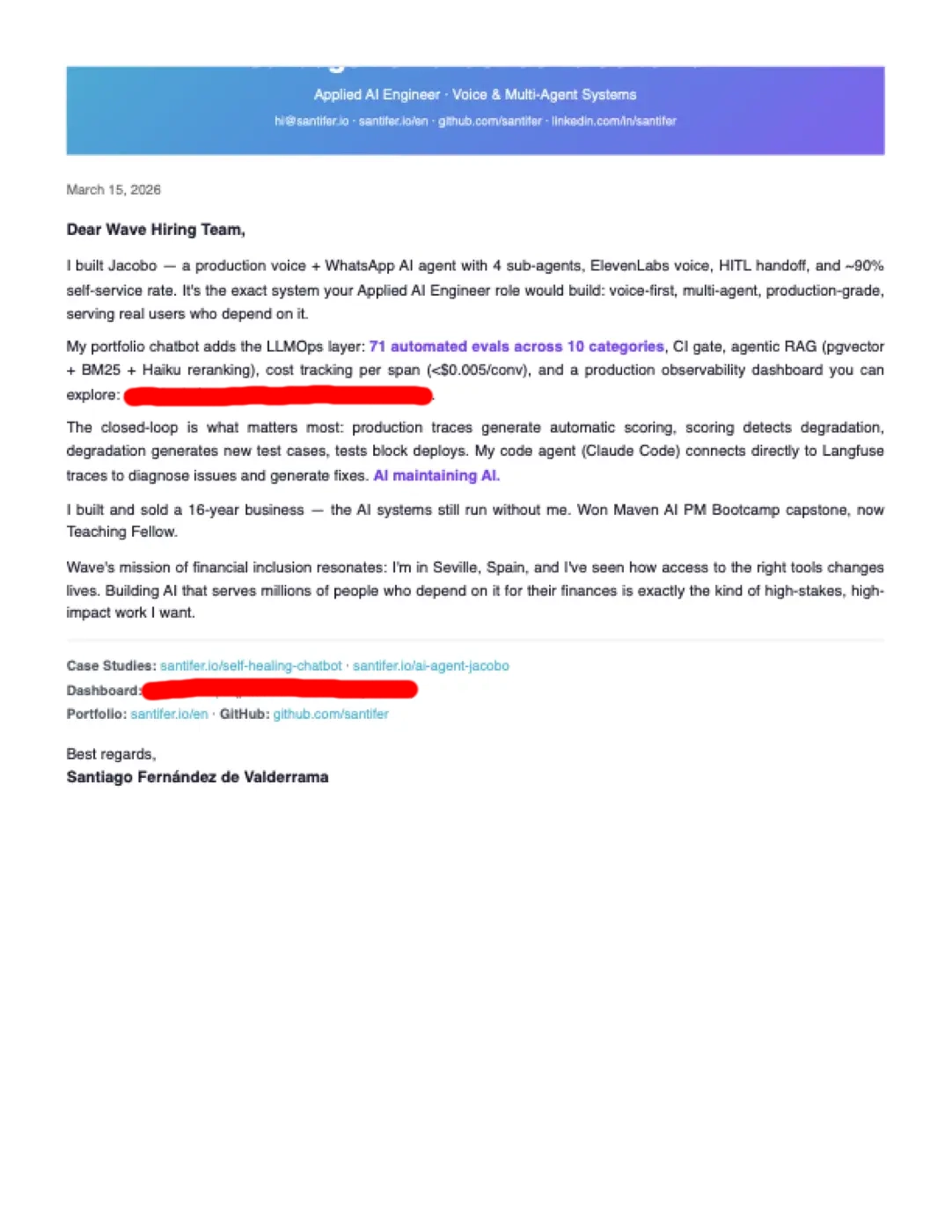

The most important result: I got the job. I am now Head of Applied AI. Career-Ops evaluated 631 offers, generated 354 personalized PDFs, and filtered the noise so I could focus on the opportunities that truly fit.

631 reports generated

40.1K+ GitHub stars

354 PDFs generated

2,600+ upvotes on r/ClaudeAI

What Happened Next?

When I no longer needed Career-Ops, I published it on GitHub. In one week it went from private repo to viral — 35K stars, 5K forks, and articles in blogs from France, China, and Korea by people who had never heard of me. Today it has crossed 40K stars and a community of 2K+ people on Discord helps each other configure and adapt the system. The project ended up demonstrating more competencies than any hiring process could.

35K+ GitHub stars in 1 week

5K+ forks

4 languages (EN, FR, ZH, KO)

6 countries with coverage

Stack

Claude Code

LLM agent: reasoning, evaluation, content generation

Playwright

Browser automation: portal scanning and form-filling

Puppeteer

PDF rendering from HTML templates

Node.js

Utility scripts: merge-tracker, cv-sync-check, generate-pdf

tmux

Parallel sessions: conductor + workers in batch

Lessons

Automate analysis, not decisions

Career-Ops evaluates 631 offers. I decide which ones get my time. HITL is not a limitation — it is the design. AI filters noise, humans provide judgment.

Modes beat a long prompt

12 modes with precise context outperform a 10,000-token system prompt. Each mode loads only what it needs. Less context means better decisions.

Dedup is more valuable than scoring

680 deduplicated URLs mean 680 evaluations I never had to repeat. Dedup saves more time than any scoring optimization.

A CV is an argument, not a document

A generic PDF convinces nobody. A CV that reorganizes proof points by relevance, injects the right keywords, and adapts framing to the archetype — that CV converts.

Batch over sequential, always

Batch mode with parallel workers processes 122 URLs while I do something else. The investment in parallel orchestration pays off on the first run.

The system IS the portfolio

Building a multi-agent system to search for multi-agent roles is the most direct proof of competence. I do not need to explain that I can do this — I am using it.

Open-source it when you no longer need it

Career-Ops was private while I was using it. When I got the job, I published it. One week later it had 35K+ stars. The lesson: the best time to open-source a project is when it has already proven its value in real production.

Why I keep it MIT

MIT license. No dark patterns, no upsell inside the CLI, no feature gating. If it works for you, it works. If you want to support the maintenance or join the community, you can. But the tool does not depend on it.

FAQ

Is this gaming the system?

Career-Ops automates analysis, not decisions. I read every report before applying. I review every PDF before sending. Same philosophy as a CRM: the system organizes, I decide.

Why Claude Code and not a script pipeline?

A script cannot reason. Career-Ops adapts scoring based on company context, reformulates keywords without fabricating, and generates narrative reports — not filled templates.

What does it cost to run?

Zero marginal cost per evaluation. Career-Ops runs on my Claude Max 20x plan ($200/mo), which I use for everything: portfolio, chatbot, articles, and Career-Ops. 631 evaluations without a single extra invoice.

Does the apply mode fill forms automatically?

It reads the page with Playwright, retrieves the cached evaluation, and generates coherent responses matching the scoring. I review before submitting — always.

What happens when the scanner finds a duplicate?

scan-history.tsv stores 680 seen URLs. Dedup works by exact URL match, plus a normalized company+role match against applications.md. Zero re-evaluations.

Is it replicable?

Yes — it is open source. The official landing is career-ops.org (docs, AI chat and guides) and the code lives at github.com/santifer/career-ops. Requires Claude Code with Playwright access. Skill files define the logic for each mode. 37K+ people have already seen, forked, or adapted it.

How do I use Career-Ops?

Career-Ops is a local tool that runs from your terminal with Claude Code. Clone the repository, configure your resume and preferences, and launch modes as needed: auto-pipeline to evaluate an offer end-to-end, scan to discover offers on job boards, batch to process many URLs in parallel, or pdf to generate a personalized resume. Everything runs on your machine — your resume and personal data never leave your computer. If you need help, a community of 1,000+ people is on Discord: discord.gg/8pRpHETxa4

What do I need to run Career-Ops?

Claude Code with a plan that includes tool access (Claude Max or Claude Pro). Playwright for web navigation. Node.js for utility scripts like tracker merging and PDF generation with Puppeteer. A working directory with your resume in markdown and your search preferences. No servers, databases, or external APIs needed — everything runs locally. The Discord community (discord.gg/8pRpHETxa4) can help with setup.

What kind of AI does Career-Ops use?

Career-Ops is not a chatbot or an API wrapper. It is a multi-agent system where Claude Code acts as the brain: it reasons about each offer, evaluates fit against your profile across 10 dimensions, and makes filtering decisions. Each of the 12 modes is a skill file with its own context and rules. Web navigation uses Playwright. PDFs use Puppeteer. Batch processing launches parallel workers in tmux. No fine-tuning or custom models — standard Claude with very precise context.

Who created Career-Ops?

I did: Santiago Fernández de Valderrama (santifer). I built it for my own AI job search after spending 16 years founding and selling a phone repair business. The system evaluated 631 offers and helped me land my current role as Head of Applied AI. When I no longer needed it, I published it as open source. In one week it reached 35K+ GitHub stars, and it has since passed 41.4K. The Discord community is now 2,300+ people: discord.gg/8pRpHETxa4

Related Systems

Career-Ops demonstrates the same competencies as these systems. Each one is a full case study with architecture, metrics, and lessons.

The Self-Healing Chatbot | Case Study

AI Agent Jacobo | Case Study

Business OS | Case Study

Programmatic SEO | Case Study

The system proved what no interview could: in the AI era, what you build with AI is the resume that gets you hired.

Here it is

Career-Ops is open source under MIT. Clone it, fork it, adapt it — it is yours.

Try career-ops

Got questions? Ask the community

2,300+ builders already use Career-Ops and share tips, templates, and setups on Discord.

Join Discord